Mirror of the ArchiveBox software source repository. Useful for storing snapshots of web pages and their resources for offline viewing or preservation.

#archival #utilities #python #webpage #web-archival #web-snapshot #storage


ArchiveBox

Open-source self-hosted web archiving.

▶️ Quickstart | Demo | GitHub | Documentation | Info & Motivation | Community

ArchiveBox is a powerful, self-hosted internet archiving solution to collect, save, and view websites offline.

Without active preservation effort, everything on the internet eventually disappears or degrades. Archive.org does a great job as a free central archive, but they require all archives to be public, and they can't save every type of content.

ArchiveBox is an open source tool that helps you archive web content on your own (or privately within an organization): save copies of browser bookmarks, preserve evidence for legal cases, back up photos from FB / Insta / Flickr, download your media from YT / Soundcloud / etc., snapshot research papers & academic citations, and more...

➡️ Use ArchiveBox as a command-line package and/or self-hosted web app on Linux, macOS, or in Docker.

📥 You can feed ArchiveBox URLs one at a time, or schedule regular imports from browser bookmarks or history, feeds like RSS, bookmark services like Pocket/Pinboard, and more. See input formats for a full list.

💾 It saves snapshots of the URLs you feed it in several redundant formats.

It also detects any content featured inside each webpage & extracts it out into a folder:

- HTML/Generic websites -> HTML, PDF, PNG, WARC, Singlefile

- YouTube/SoundCloud/etc. -> MP3/MP4 + subtitles, description, thumbnail

- News articles -> article body TXT + title, author, featured images

- Github/Gitlab/etc. links -> git cloned source code

- and more...

It uses normal filesystem folders to organize archives (no complicated proprietary formats), and offers a CLI + web UI.

🏛️ ArchiveBox is used by many professionals and hobbyists who save content off the web, for example:

- Individuals: backing up browser bookmarks/history, saving FB/Insta/etc. content, shopping lists

- Journalists: crawling and collecting research, preserving quoted material, fact-checking and review

- Lawyers: evidence collection, hashing & integrity verifying, search, tagging, & review

- Researchers: collecting AI training sets, feeding analysis / web crawling pipelines

The goal is to sleep soundly knowing the part of the internet you care about will be automatically preserved in durable, easily accessible formats for decades after it goes down.

📦 Get ArchiveBox with docker / apt / brew / pip3 / nix / etc. (see Quickstart below).

# Get ArchiveBox with Docker or Docker Compose (recommended)

docker run -v $PWD/data:/data -it archivebox/archivebox:dev init --setup

# Or install with your preferred package manager (see Quickstart below for apt, brew, and more)

pip3 install archivebox

# Or use the optional auto setup script to install it

curl -sSL 'https://get.archivebox.io' | sh

🔢 Example usage: adding links to archive.

archivebox add 'https://example.com' # add URLs one at a time

archivebox add < ~/Downloads/bookmarks.json # or pipe in URLs in any text-based format

archivebox schedule --every=day --depth=1 https://example.com/rss.xml # or auto-import URLs regularly on a schedule

🔢 Example usage: viewing the archived content.

archivebox server 0.0.0.0:8000 # use the interactive web UI

archivebox list 'https://example.com' # use the CLI commands (--help for more)

ls ./archive/*/index.json # or browse directly via the filesystem

Key Features

- Free & open source, doesn't require signing up online, stores all data locally

- Powerful, intuitive command line interface with modular optional dependencies

- Comprehensive documentation, active development, and rich community

- Extracts a wide variety of content out-of-the-box: media (yt-dlp), articles (readability), code (git), etc.

- Supports scheduled/realtime importing from many types of sources

- Uses standard, durable, long-term formats like HTML, JSON, PDF, PNG, MP4, TXT, and WARC

- Usable as a oneshot CLI, self-hosted web UI, Python API (BETA), REST API (ALPHA), or desktop app (ALPHA)

- Saves all pages to archive.org as well by default for redundancy (can be disabled for local-only mode)

- Advanced users: support for archiving content requiring login/paywall/cookies (see wiki security caveats!)

- Planned: support for running JS during archiving to adblock, autoscroll, modal-hide, thread-expand

🤝 Professional Integration

Contact us if your non-profit institution/org wants to use ArchiveBox professionally.

- setup & support, team permissioning, hashing, audit logging, backups, custom archiving etc.

- for individuals, NGOs, academia, governments, journalism, law, and more...

All our work is open-source and primarily geared towards non-profits.

Support/consulting pays for hosting and funds new ArchiveBox open-source development.

Quickstart

🖥 Supported OSs: Linux/BSD, macOS, Windows (Docker) 👾 CPUs: amd64 (x86_64), arm64 (arm8), arm7 (raspi>=3)

Note: On arm7 the playwright package is not available, so chromium must be installed manually if needed.
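To check which entry in the CPU list above a machine falls under, you can map the output of uname -m onto it. This is a minimal sketch with a hypothetical helper (archivebox_cpu_support is not part of ArchiveBox); the matched spellings (x86_64/amd64, aarch64/arm64, armv7*) are the usual Linux and macOS values, which is an assumption, so a "not listed" result only means the value isn't one of those spellings.

```shell
# Hypothetical helper: classify a `uname -m` value against the supported CPU list.
# The matched spellings are common uname outputs (an assumption, not exhaustive).
archivebox_cpu_support() {
    arch=${1:-$(uname -m)}
    case $arch in
        x86_64|amd64)  echo "supported" ;;
        aarch64|arm64) echo "supported" ;;
        armv7*)        echo "supported (install chromium manually)" ;;
        *)             echo "not listed" ;;
    esac
}

archivebox_cpu_support   # checks the current machine
```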

✳️ Easy Setup

docker-compose (macOS/Linux/Windows) 👈 recommended

👍 Docker Compose is recommended for the easiest install/update UX + best security + all the extras out-of-the-box.

- Install Docker and Docker Compose on your system (if not already installed).

- Download the docker-compose.yml file into a new empty directory (can be anywhere).

    mkdir ~/archivebox && cd ~/archivebox
    curl -O 'https://raw.githubusercontent.com/ArchiveBox/ArchiveBox/dev/docker-compose.yml'

- Run the initial setup and create an admin user.

    docker compose run archivebox init --setup

- Optional: Start the server, then log in to the Web UI at http://127.0.0.1:8000 ⇢ Admin.

    docker compose up
    # completely optional, CLI can always be used without running a server
    # docker compose run [-T] archivebox [subcommand] [--args]

docker run (macOS/Linux/Windows)

- Install Docker on your system (if not already installed).

- Create a new empty directory and initialize your collection (can be anywhere).

    mkdir ~/archivebox && cd ~/archivebox
    docker run -v $PWD:/data -it archivebox/archivebox init --setup

- Optional: Start the server, then log in to the Web UI at http://127.0.0.1:8000 ⇢ Admin.

    docker run -v $PWD:/data -p 8000:8000 archivebox/archivebox
    # completely optional, CLI can always be used without running a server
    # docker run -v $PWD:/data -it archivebox/archivebox [subcommand] [--args]

bash auto-setup script (macOS/Linux)

- Install Docker on your system (optional, highly recommended but not required).

- Run the automatic setup script.

curl -sSL 'https://get.archivebox.io' | sh

See setup.sh for the source code of the auto-install script. See the "Against curl | sh as an install method" blog post for my thoughts on the shortcomings of this install method.

🛠 Package Manager Setup

apt (Ubuntu/Debian)

- Add the ArchiveBox PPA to your apt sources and import its signing key.

    echo "deb http://ppa.launchpad.net/archivebox/archivebox/ubuntu focal main" | sudo tee /etc/apt/sources.list.d/archivebox.list
    sudo apt-key adv --keyserver keyserver.ubuntu.com --recv-keys C258F79DCC02E369
    sudo apt update

- Install the ArchiveBox package using apt.

    sudo apt install archivebox
    sudo python3 -m pip install --upgrade --ignore-installed archivebox # pip needed because apt only provides a broken older version of Django

- Create a new empty directory and initialize your collection (can be anywhere).

    mkdir ~/archivebox && cd ~/archivebox
    archivebox init --setup # if any problems, install with pip instead

  Note: If you encounter issues with NPM/NodeJS, install a more recent version.

- Optional: Start the server, then log in to the Web UI at http://127.0.0.1:8000 ⇢ Admin.

    archivebox server 0.0.0.0:8000
    # completely optional, CLI can always be used without running a server
    # archivebox [subcommand] [--args]

See below for more usage examples using the CLI, Web UI, or filesystem/SQL/Python to manage your archive.

See the debian-archivebox repo for more details about this distribution.

brew (macOS)

- Install Homebrew on your system (if not already installed).

- Install the ArchiveBox package using brew.

    brew tap archivebox/archivebox
    brew install archivebox

- Create a new empty directory and initialize your collection (can be anywhere).

    mkdir ~/archivebox && cd ~/archivebox
    archivebox init --setup # if any problems, install with pip instead

- Optional: Start the server, then log in to the Web UI at http://127.0.0.1:8000 ⇢ Admin.

    archivebox server 0.0.0.0:8000
    # completely optional, CLI can always be used without running a server
    # archivebox [subcommand] [--args]

See the homebrew-archivebox repo for more details about this distribution.

pip (macOS/Linux/BSD)

- Install Python >= v3.9 and Node >= v18 on your system (if not already installed).

- Install the ArchiveBox package using pip3.

    pip3 install archivebox

- Create a new empty directory and initialize your collection (can be anywhere).

    mkdir ~/archivebox && cd ~/archivebox
    archivebox init --setup # install any missing extras like wget/git/ripgrep/etc. manually as needed

- Optional: Start the server, then log in to the Web UI at http://127.0.0.1:8000 ⇢ Admin.

    archivebox server 0.0.0.0:8000
    # completely optional, CLI can always be used without running a server
    # archivebox [subcommand] [--args]

See the pip-archivebox repo for more details about this distribution.

pacman / pkg / nix (Arch/FreeBSD/NixOS/more)

> [!WARNING]
> *These are contributed by external volunteers and may lag behind the official `pip` channel.*

- Arch: yay -S archivebox (contributed by @imlonghao)

- FreeBSD: curl -sSL 'https://get.archivebox.io' | sh (uses pkg + pip3 under-the-hood)

- Nix: nix-env --install archivebox (contributed by @siraben)

- More: contribute another distribution...!

🎗 Other Options

docker + electron Desktop App (macOS/Linux/Windows)

- Install Docker on your system (if not already installed).

- Download a binary release for your OS or build the native app from source:

    - macOS: ArchiveBox.app.zip
    - Linux: ArchiveBox.deb (alpha: build manually)
    - Windows: ArchiveBox.exe (beta: build manually)

✨ Alpha (contributors wanted!): for more info, see the: Electron ArchiveBox repo.

Paid hosting solutions (cloud VPS)


-

(get hosting, support, and feature customization directly from us)

-

(for a generalist software consultancy that helps with ArchiveBox maintenance)

(USD $29-250/mo, pricing)

(from USD $2.6/mo)

-

(USD $5-50+/mo, 🎗 referral link, instructions)

-

(USD $2.5-50+/mo, 🎗 referral link, instructions)

-

(USD $10-50+/mo, instructions)

(USD $60-200+/mo)

(USD $60-200+/mo)

Other providers of paid ArchiveBox hosting (not officially endorsed):

Referral links marked 🎗 provide $5-10 of free credit for new users and help pay for our demo server hosting costs.

➡️ Next Steps

- Import URLs from some of the supported Input Formats or view the supported Output Formats...

- Tweak your UI or archiving behavior Configuration or read about some of the Caveats and troubleshooting steps...

- Read about the Dependencies used for archiving, the Upgrading Process, or the Archive Layout on disk...

- Or check out our full Documentation or Community Wiki...

Usage

⚡️ CLI Usage

# archivebox [subcommand] [--args]

# docker-compose run archivebox [subcommand] [--args]

# docker run -v $PWD:/data -it archivebox/archivebox [subcommand] [--args]

archivebox init --setup # safe to run init multiple times (also how you update versions)

archivebox --version

archivebox help

- archivebox setup/init/config/status/manage to administer your collection

- archivebox add/schedule/remove/update/list/shell/oneshot to manage Snapshots in the archive

- archivebox schedule to pull in fresh URLs regularly from bookmarks/history/Pocket/Pinboard/RSS/etc.

🖥 Web UI Usage

archivebox manage createsuperuser # set an admin password

archivebox server 0.0.0.0:8000 # open http://127.0.0.1:8000 to view it

# you can also configure whether or not login is required for most features

archivebox config --set PUBLIC_INDEX=False

archivebox config --set PUBLIC_SNAPSHOTS=False

archivebox config --set PUBLIC_ADD_VIEW=False

🗄 SQL/Python/Filesystem Usage

sqlite3 ./index.sqlite3 # run SQL queries on your index

archivebox shell # explore the Python API in a REPL

ls ./archive/*/index.html # or inspect snapshots on the filesystem

DEMO: https://demo.archivebox.io

Usage | Configuration | Caveats

Overview

Input Formats

ArchiveBox supports many input formats for URLs, including Pocket & Pinboard exports, Browser bookmarks, Browser history, plain text, HTML, markdown, and more!

Click these links for instructions on how to prepare your links from these sources:

- TXT, RSS, XML, JSON, CSV, SQL, HTML, Markdown, or any other text-based format...

- Browser history or browser bookmarks (see instructions for: Chrome, Firefox, Safari, IE, Opera, and more...)

- Browser extension archivebox-exporter (realtime archiving from Chrome/Chromium/Firefox)

- Pocket, Pinboard, Instapaper, Shaarli, Delicious, Reddit Saved, Wallabag, Unmark.it, OneTab, and more...

# archivebox add --help

archivebox add 'https://example.com/some/page'

archivebox add < ~/Downloads/firefox_bookmarks_export.html

archivebox add --depth=1 'https://news.ycombinator.com#2020-12-12'

echo 'http://example.com' | archivebox add

echo 'any_text_with [urls](https://example.com) in it' | archivebox add

# if using Docker, add -i when piping stdin:

# echo 'https://example.com' | docker run -v $PWD:/data -i archivebox/archivebox add

# if using Docker Compose, add -T when piping stdin / stdout:

# echo 'https://example.com' | docker compose run -T archivebox add

See the Usage: CLI page for documentation and examples.
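Because any text-based input is accepted, it can help to preview which URLs a file contains before piping it in. This is a minimal sketch using grep -oE with a simple regex of our own; it approximates, and is not, the parser ArchiveBox uses internally, so results may differ on edge cases. The function name extract_urls is hypothetical.

```shell
# Hypothetical helper: print each http(s) URL found on stdin, one per line.
# The pattern stops at whitespace, brackets, parentheses, angle brackets, and
# double quotes, so a Markdown link like [text](https://example.com) yields just the URL.
extract_urls() {
    grep -oE 'https?://[^][[:space:]()<>"]+'
}

# preview first, then pipe the same list into ArchiveBox:
#   extract_urls < notes.md
#   extract_urls < notes.md | archivebox add
```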

It also includes a built-in scheduled import feature with archivebox schedule and browser bookmarklet, so you can pull in URLs from RSS feeds, websites, or the filesystem regularly/on-demand.

Output Formats

Inside each Snapshot folder, ArchiveBox saves these different types of extractor outputs as plain files:

./archive/<timestamp>/*

- Index: index.html & index.json, HTML and JSON index files containing metadata and details

- Title, Favicon, Headers: response headers, site favicon, and parsed site title

- SingleFile: singlefile.html, HTML snapshot rendered with headless Chrome using SingleFile

- Wget Clone: example.com/page-name.html, wget clone of the site with warc/<timestamp>.warc.gz

- Chrome Headless

    - PDF: output.pdf, printed PDF of the site using headless Chrome
    - Screenshot: screenshot.png, 1440x900 screenshot of the site using headless Chrome
    - DOM Dump: output.html, DOM dump of the HTML after rendering using headless Chrome

- Article Text: article.html/json, article text extraction using Readability & Mercury

- Archive.org Permalink: archive.org.txt, a link to the saved site on archive.org

- Audio & Video: media/, all audio/video files + playlists, including subtitles & metadata with youtube-dl (or yt-dlp)

- Source Code: git/, clone of any repository found on GitHub, Bitbucket, or GitLab links

- More coming soon! See the Roadmap...

It does everything out-of-the-box by default, but you can disable or tweak individual archive methods via environment variables / config.

Configuration

ArchiveBox can be configured via environment variables, by using the archivebox config CLI, or by editing the ArchiveBox.conf config file directly.

archivebox config # view the entire config

archivebox config --get CHROME_BINARY # view a specific value

archivebox config --set CHROME_BINARY=chromium # persist a config using CLI

# OR

echo CHROME_BINARY=chromium >> ArchiveBox.conf # persist a config using file

# OR

env CHROME_BINARY=chromium archivebox ... # run with a one-off config

These methods also work the same way when run inside Docker, see the Docker Configuration wiki page for details.

The config loading logic with all the options defined is here: archivebox/config.py.

Most options are also documented on the Configuration Wiki page.

Most Common Options to Tweak

# e.g. archivebox config --set TIMEOUT=120

TIMEOUT=120 # default: 60 add more seconds on slower networks

CHECK_SSL_VALIDITY=False # default: True False = allow saving URLs w/ bad SSL

SAVE_ARCHIVE_DOT_ORG=False # default: True False = disable Archive.org saving

MEDIA_MAX_SIZE=1500m # default: 750m raise/lower youtubedl output size

PUBLIC_INDEX=True # default: True whether anon users can view index

PUBLIC_SNAPSHOTS=True # default: True whether anon users can view pages

PUBLIC_ADD_VIEW=False # default: False whether anon users can add new URLs

CHROME_USER_AGENT="Mozilla/5.0 ..." # change these to get around bot blocking

WGET_USER_AGENT="Mozilla/5.0 ..."

CURL_USER_AGENT="Mozilla/5.0 ..."

Dependencies

To achieve high-fidelity archives in as many situations as possible, ArchiveBox depends on a variety of high-quality 3rd-party tools and libraries that specialize in extracting different types of content.


For better security, easier updating, and to avoid polluting your host system with extra dependencies, it is strongly recommended to use the official Docker image with everything pre-installed for the best experience.

These optional dependencies used for archiving sites include:

- chromium/chrome (for screenshots, PDF, DOM HTML, and headless JS scripts)

- node & npm (for readability, mercury, and singlefile)

- wget (for plain HTML, static files, and WARC saving)

- curl (for fetching headers, favicon, and posting to Archive.org)

- youtube-dl or yt-dlp (for audio, video, and subtitles)

- git (for cloning git repos)

- and more as we grow...

You don't need to install every dependency to use ArchiveBox. ArchiveBox will automatically disable extractors that rely on dependencies that aren't installed, based on what is configured and available in your $PATH.

If not using Docker, make sure to keep the dependencies up-to-date yourself and check that ArchiveBox isn't reporting any incompatibility with the versions you install.

# install python3 and archivebox with your system package manager

# apt/brew/pip/etc install ... (see Quickstart instructions above)

archivebox setup # auto install all the extractors and extras

archivebox --version # see info and check validity of installed dependencies
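Since extractors are enabled or disabled based on what is found in your $PATH, a quick look at which of the tools listed above your shell can see may save a round trip. This is a minimal sketch using POSIX command -v; it does not replicate ArchiveBox's own detection (which also considers configured binary paths and versions), and check_deps is a hypothetical name.

```shell
# Hypothetical helper: report which of the given binaries are on $PATH.
# Binary names vary by platform (e.g. chromium vs. chromium-browser vs. google-chrome),
# so adjust the list; `archivebox --version` remains the authoritative check.
check_deps() {
    for bin in "$@"; do
        if command -v "$bin" >/dev/null 2>&1; then
            printf '%s: found\n' "$bin"
        else
            printf '%s: missing\n' "$bin"
        fi
    done
}

check_deps chromium node npm wget curl yt-dlp git
```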

Installing directly on Windows without Docker or WSL/WSL2/Cygwin is not officially supported (I cannot respond to Windows support tickets), but some advanced users have reported getting it working.

Learn More

- https://github.com/ArchiveBox/ArchiveBox/wiki/Install#dependencies

- https://github.com/ArchiveBox/ArchiveBox/wiki/Chromium-Install

- https://github.com/ArchiveBox/ArchiveBox/wiki/Upgrading-or-Merging-Archives

- https://github.com/ArchiveBox/ArchiveBox/wiki/Troubleshooting#installing

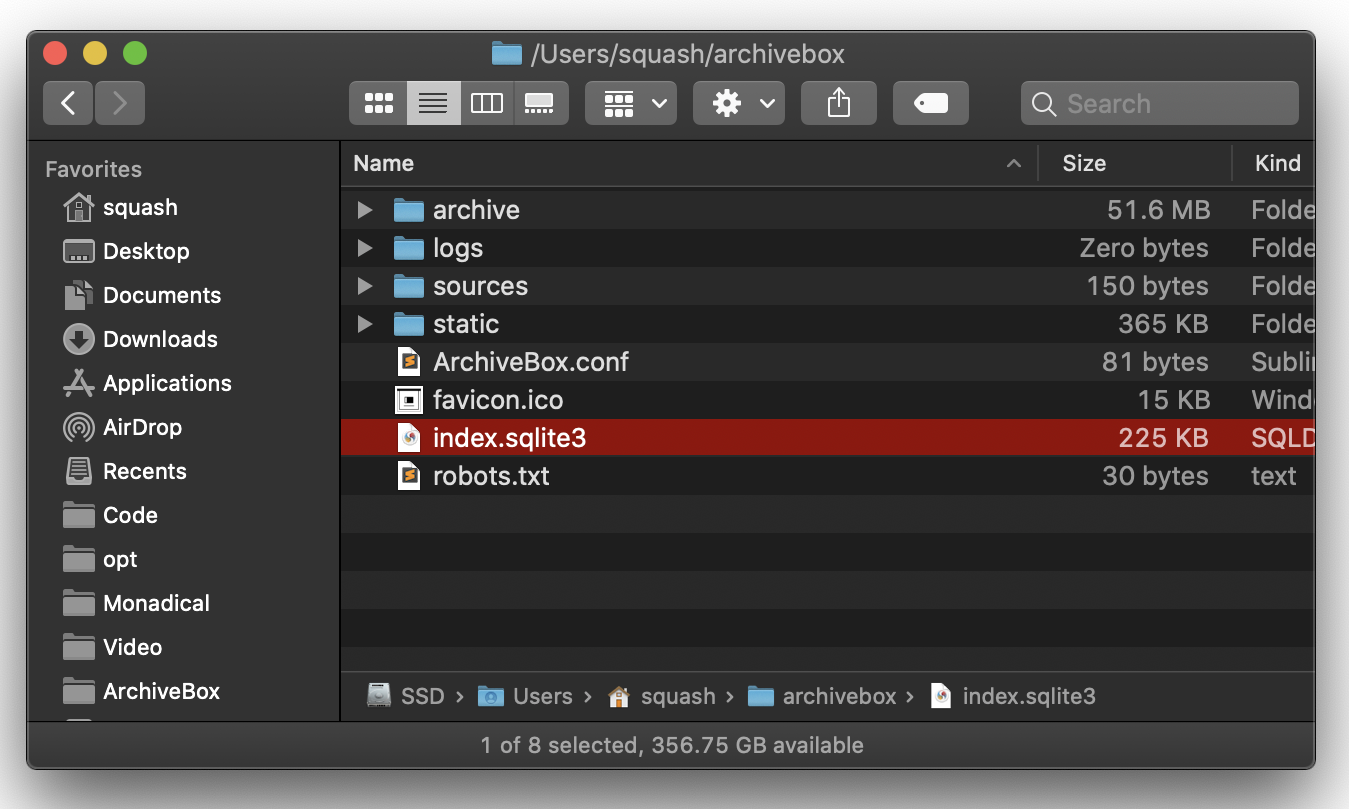

Archive Layout

All of ArchiveBox's state (including the SQLite DB, archived assets, config, logs, etc.) is stored in a single folder called the "ArchiveBox Data Folder".

Data folders can be created anywhere (~/archivebox or $PWD/data as seen in our examples), and you can create more than one for different collections.


All archivebox CLI commands are designed to be run from inside an ArchiveBox data folder, starting with archivebox init to initialize a new collection inside an empty directory.

mkdir ~/archivebox && cd ~/archivebox # just an example, can be anywhere

archivebox init

The on-disk layout is optimized to be easy to browse by hand and durable long-term. The main index is a standard index.sqlite3 database in the root of the data folder (it can also be exported as static JSON/HTML), and the archive snapshots are organized by date-added timestamp in the ./archive/ subfolder.

/data/
    index.sqlite3
    ArchiveBox.conf
    archive/
        ...
        1617687755/
            index.html
            index.json
            screenshot.png
            media/some_video.mp4
            warc/1617687755.warc.gz
            git/somerepo.git
            ...

Each snapshot subfolder ./archive/<timestamp>/ includes a static index.json and index.html describing its contents, and the snapshot extractor outputs are plain files within the folder.
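Because snapshot folders are named by their date-added Unix timestamp, the folder names alone give a rough chronological listing without a running server. This is a minimal sketch assuming the ./archive/<timestamp>/index.json layout shown above; list_snapshots is a hypothetical helper, and the date fallback covers the differing GNU and BSD/macOS flags.

```shell
# Hypothetical helper: list snapshot folders under DATA_DIR/archive/ that contain an
# index.json, printing the folder name and its timestamp as a UTC date.
list_snapshots() {
    data_dir=${1:-.}
    for dir in "$data_dir"/archive/*/; do
        [ -f "${dir}index.json" ] || continue    # skip anything that isn't a snapshot
        ts=$(basename "$dir")
        secs=${ts%%.*}                           # drop any fractional part for date(1)
        # GNU date takes -d @SECS; BSD/macOS date takes -r SECS
        day=$(date -u -d "@$secs" +%Y-%m-%d 2>/dev/null || date -u -r "$secs" +%Y-%m-%d) || day=unknown
        printf '%s %s\n' "$ts" "$day"
    done
}

list_snapshots .   # run from inside a data folder
```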

Learn More

- https://github.com/ArchiveBox/ArchiveBox/wiki/Usage#Disk-Layout

- https://github.com/ArchiveBox/ArchiveBox/wiki/Usage#large-archives

- https://github.com/ArchiveBox/ArchiveBox/wiki/Security-Overview#output-folder

- https://github.com/ArchiveBox/ArchiveBox/wiki/Publishing-Your-Archive

- https://github.com/ArchiveBox/ArchiveBox/wiki/Upgrading-or-Merging-Archives

Static Archive Exporting

You can export the main index to browse it statically as plain HTML files in a folder (without needing to run a server).


Note: These exports are not paginated, so exporting many URLs or the entire archive at once may be slow. Use the filtering CLI flags on the archivebox list command to export specific Snapshots or ranges.

# archivebox list --help

archivebox list --html --with-headers > index.html # export to static html table

archivebox list --json --with-headers > index.json # export to json blob

archivebox list --csv=timestamp,url,title > index.csv # export to csv spreadsheet

# (if using Docker Compose, add the -T flag when piping)

# docker compose run -T archivebox list --html --filter-type=search snozzberries > index.html

The paths in the static exports are relative, so make sure to keep them next to your ./archive folder when backing them up or viewing them.
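Since the exported index uses relative paths, one simple way to keep it viewable is to bundle it together with ./archive in a single tarball. This is a minimal sketch with a hypothetical backup_static_export helper; it assumes the export was written to index.html, index.json, or index.csv in the data folder root, as in the commands above. It bundles only what static viewing needs and is not a full backup of the collection (that would also need index.sqlite3 and ArchiveBox.conf).

```shell
# Hypothetical helper: bundle the static export file(s) together with ./archive so the
# relative links keep working after extraction. Not a full collection backup.
backup_static_export() {
    data_dir=$1
    out=$2
    set --                                   # reuse "$@" as the list of files to include
    for f in index.html index.json index.csv; do
        if [ -f "$data_dir/$f" ]; then
            set -- "$@" "$f"
        fi
    done
    tar -czf "$out" -C "$data_dir" "$@" archive
}

# e.g. from inside a data folder:
#   archivebox list --html --with-headers > index.html
#   backup_static_export . ../archive-export.tar.gz
```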

Learn More

- https://github.com/ArchiveBox/ArchiveBox/wiki/Publishing-Your-Archive#2-export-and-host-it-as-static-html

- https://github.com/ArchiveBox/ArchiveBox/wiki/Security-Overview#publishing

- https://github.com/ArchiveBox/ArchiveBox/wiki/Configuration#public_index--public_snapshots--public_add_view

Caveats

Archiving Private Content

If you're importing pages with private content or URLs containing secret tokens you don't want public (e.g. Google Docs, paywalled content, unlisted videos, etc.), you may want to disable some of the extractor methods to avoid leaking that content to 3rd party APIs or the public.


# don't save private content to ArchiveBox, e.g.:

archivebox add 'https://docs.google.com/document/d/12345somePrivateDocument'

archivebox add 'https://vimeo.com/somePrivateVideo'

# without first disabling saving to Archive.org:

archivebox config --set SAVE_ARCHIVE_DOT_ORG=False # disable saving all URLs in Archive.org

# restrict the main index, Snapshot content, and Add Page to authenticated users as-needed:

archivebox config --set PUBLIC_INDEX=False

archivebox config --set PUBLIC_SNAPSHOTS=False

archivebox config --set PUBLIC_ADD_VIEW=False

# if extra paranoid or anti-Google:

archivebox config --set SAVE_FAVICON=False # disable favicon fetching (it calls a Google API passing the URL's domain part only)

archivebox config --set CHROME_BINARY=chromium # ensure it's using Chromium instead of Chrome

Learn More

- https://github.com/ArchiveBox/ArchiveBox/wiki/Publishing-Your-Archive

- https://github.com/ArchiveBox/ArchiveBox/wiki/Security-Overview

- https://github.com/ArchiveBox/ArchiveBox/wiki/Chromium-Install#setting-up-a-chromium-user-profile

- https://github.com/ArchiveBox/ArchiveBox/wiki/Configuration#chrome_user_data_dir

- https://github.com/ArchiveBox/ArchiveBox/wiki/Configuration#cookies_file

Security Risks of Viewing Archived JS

Be aware that malicious archived JS can access the contents of other pages in your archive when viewed. Because the Web UI serves all viewed snapshots from a single domain, they share a request context and typical CSRF/CORS/XSS/CSP protections do not work to prevent cross-site request attacks. See the Security Overview page and Issue #239 for more details.


# visiting an archived page with malicious JS:

http://127.0.0.1:8000/archive/1602401954/example.com/index.html

# example.com/index.js can now make a request to read everything from:

http://127.0.0.1:8000/index.html

http://127.0.0.1:8000/archive/*

# then example.com/index.js can send it off to some evil server

The admin UI is also served from the same origin as replayed JS, so malicious pages could also potentially use your ArchiveBox login cookies to perform admin actions (e.g. adding/removing links, running extractors, etc.). We are planning to fix this security shortcoming in a future version by using separate ports/origins to serve the Admin UI and archived content (see Issue #239).

Note: Only the wget & dom extractor methods execute archived JS when viewing snapshots; all other archive methods produce static output that does not execute JS on viewing. If you are worried about these issues, you should disable these extractors using archivebox config --set SAVE_WGET=False SAVE_DOM=False.

Learn More

- https://github.com/ArchiveBox/ArchiveBox/wiki/Security-Overview

- https://github.com/ArchiveBox/ArchiveBox/issues/239

- https://github.com/ArchiveBox/ArchiveBox/security/advisories/GHSA-cr45-98w9-gwqx (CVE-2023-45815)

- https://github.com/ArchiveBox/ArchiveBox/wiki/Security-Overview#publishing

Working Around Sites that Block Archiving

For various reasons, many large sites (Reddit, Twitter, Cloudflare, etc.) actively block archiving or bots in general. There are a number of approaches to work around this.


- Set CHROME_USER_AGENT, WGET_USER_AGENT, CURL_USER_AGENT to impersonate a real browser (instead of an ArchiveBox bot)

- Set up a logged-in browser session for archiving using CHROME_USER_DATA_DIR & COOKIES_FILE

- Rewrite your URLs before archiving to swap in an alternative frontend that's more bot-friendly, e.g. reddit.com/some/url -> teddit.net/some/url: https://github.com/mendel5/alternative-front-ends
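The URL-rewriting approach can be done with a one-line filter before piping URLs in. This is a minimal sketch using sed -E, assuming a plain list with one URL per line; rewrite_frontends is hypothetical, and whether a given alternative front-end (including teddit.net, used here only because it is the example above) is still online varies, so check the linked list before relying on one.

```shell
# Hypothetical helper: rewrite reddit.com URLs (one per line on stdin) to the
# teddit.net front-end from the example above; other lines pass through unchanged.
rewrite_frontends() {
    sed -E 's#^(https?://)(www\.|old\.)?reddit\.com/#\1teddit.net/#'
}

# usage:
#   rewrite_frontends < urls.txt | archivebox add
```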

In the future we plan on adding support for running JS scripts during archiving to block ads, cookie popups, modals, and fix other issues. Follow here for progress: Issue #51.

Saving Multiple Snapshots of a Single URL

To differentiate when a single URL is archived multiple times, ArchiveBox uses a hash with the current date appended to the URL (e.g. https://example.com#2020-10-24).


Because ArchiveBox uniquely identifies snapshots by URL, it must use a workaround to take multiple snapshots of the same URL (otherwise they would show up as a single Snapshot entry). It makes the URLs of repeated snapshots unique by adding a hash with the archive date at the end:

archivebox add 'https://example.com#2020-10-24'

...

archivebox add 'https://example.com#2020-10-25'

A button in the Admin UI provides a shortcut for this hash-date multi-snapshotting workaround.

Improved support for saving multiple snapshots of a single URL without this hash-date workaround will be added eventually (along with the ability to view diffs of the changes between runs).
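If you add repeat snapshots from scripts, the hash-date suffix can be generated instead of typed. This is a minimal sketch with a hypothetical dated_url helper; replacing any existing #fragment is our own design choice, not documented ArchiveBox behavior.

```shell
# Hypothetical helper: append "#YYYY-MM-DD" to a URL so re-adding it on a new day
# creates a separate Snapshot. Any existing #fragment is replaced (our choice).
dated_url() {
    base=${1%%#*}
    day=${2:-$(date +%Y-%m-%d)}    # defaults to today's (local) date
    printf '%s#%s\n' "$base" "$day"
}

# usage:
#   archivebox add "$(dated_url 'https://example.com')"
```

Running it twice on the same day yields the same URL, so this gives at most one extra Snapshot per day. If the fragment matters for a page (e.g. single-page-app routes like #/page), drop the fragment stripping.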

Learn More

- https://github.com/ArchiveBox/ArchiveBox/issues/179

- https://github.com/ArchiveBox/ArchiveBox/wiki/Usage#explanation-of-buttons-in-the-web-ui---admin-snapshots-list

Storage Requirements

Because ArchiveBox is designed to ingest a large volume of URLs with multiple copies of each URL stored by different 3rd-party tools, it can be quite disk-space intensive.

There are also some special requirements when using filesystems like NFS/SMB/FUSE.


ArchiveBox can use anywhere from ~1GB per 1000 articles to ~50GB per 1000 articles, mostly depending on whether you're saving audio & video using SAVE_MEDIA=True and whether you lower MEDIA_MAX_SIZE from its default of 750mb.
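Those figures translate into a quick back-of-the-envelope range for a planned collection. This is a minimal sketch of the arithmetic only, using the ~1GB and ~50GB per 1000 articles bounds from the paragraph above; estimate_storage_gb is a hypothetical helper, and real usage depends on which extractors you enable.

```shell
# Hypothetical helper: rough disk estimate for N archived articles, using the
# ~1 GB (no media) to ~50 GB (with media) per 1000 articles range quoted above.
estimate_storage_gb() {
    n=$1
    low=$(( (n * 1 + 999) / 1000 ))     # round up to whole GB
    high=$(( (n * 50 + 999) / 1000 ))
    printf '%s-%s GB\n' "$low" "$high"
}

estimate_storage_gb 20000    # prints "20-1000 GB"

# compare against real usage of an existing collection:
#   du -sh ./archive
```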

Disk usage can be reduced by using a compressed/deduplicated filesystem like ZFS/BTRFS, or by turning off extractor methods you don't need. You can also deduplicate content with a tool like fdupes or rdfind. Don't store large collections on older filesystems like EXT3/FAT, as they may not be able to handle more than 50k directory entries in the archive/ folder. Try to keep the index.sqlite3 file on a local drive (not a network mount) or SSD for maximum performance; the archive/ folder, however, can be on a network mount or slower HDD.

If using Docker or NFS/SMB/FUSE for the data/archive/ folder, you may need to set PUID & PGID and disable root_squash on your fileshare server.

Learn More

- https://github.com/ArchiveBox/ArchiveBox/wiki/Usage#Disk-Layout

- https://github.com/ArchiveBox/ArchiveBox/wiki/Security-Overview#output-folder

- https://github.com/ArchiveBox/ArchiveBox/wiki/Usage#large-archives

- https://github.com/ArchiveBox/ArchiveBox/wiki/Configuration#puid--pgid

- https://github.com/ArchiveBox/ArchiveBox/wiki/Security-Overview#do-not-run-as-root

Screenshots

---

Background & Motivation

ArchiveBox aims to enable more of the internet to be saved from deterioration by empowering people to self-host their own archives. The intent is for all the web content you care about to be viewable with common software in 50 - 100 years without needing to run ArchiveBox or other specialized software to replay it.


Vast treasure troves of knowledge are lost every day on the internet to link rot. As a society, we have an imperative to preserve some important parts of that treasure, just like we preserve our books, paintings, and music in physical libraries long after the originals go out of print or fade into obscurity.

Whether it's to resist censorship by saving articles before they get taken down or edited, or just to save a collection of early 2010s flash games you love to play, having the tools to archive internet content enables you to save the stuff you care most about before it disappears.

The balance between the permanent and ephemeral nature of content on the internet is part of what makes it beautiful. I don't think everything should be preserved in an automated fashion (making all content permanent and never removable), but I do think people should be able to decide for themselves and effectively archive specific content that they care about.

Because modern websites are complicated and often rely on dynamic content, ArchiveBox archives the sites in several different formats beyond what public archiving services like Archive.org/Archive.is save. Using multiple methods and the market-dominant browser to execute JS ensures we can save even the most complex, finicky websites in at least a few high-quality, long-term data formats.

Comparison to Other Projects

[!TIP] Check out our community page for an index of web archiving initiatives and projects.

A variety of open and closed-source archiving projects exist, but few provide a nice UI and CLI to manage a large, high-fidelity archive collection over time.

ArchiveBox tries to be a robust, set-and-forget archiving solution suitable for archiving RSS feeds, bookmarks, or your entire browsing history (beware, it may be too big to store), including private/authenticated content that you wouldn't otherwise share with a centralized service (this is not recommended due to JS replay security concerns).

Comparison With Centralized Public Archives

Not all content is suitable to be archived in a centralized collection, whether because it's private, copyrighted, too large, or too complex. ArchiveBox hopes to fill that gap.

By having each user store their own content locally, we can save much larger portions of everyone's browsing history than a shared centralized service would be able to handle. The eventual goal is to work towards federated archiving where users can share portions of their collections with each other.

Comparison With Other Self-Hosted Archiving Options

ArchiveBox differentiates itself from similar self-hosted projects by providing a comprehensive CLI for managing your archive, a Web UI that can be used either independently or together with the CLI, and a simple on-disk data format that can be used without either.
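Because snapshots are stored as ordinary files, a few lines of standard-library Python can read a collection with neither the CLI nor the Web UI. This is a sketch, not an ArchiveBox API: it assumes a layout where each snapshot is a subfolder of `archive/` containing an `index.json` object with `url` and `title` keys, so verify that against your own data folder first. The function name `list_snapshots` is hypothetical.

```python
import json
from pathlib import Path
from typing import Iterator, Tuple


def list_snapshots(data_dir: str) -> Iterator[Tuple[str, str, str]]:
    """Yield (folder name, url, title) for each snapshot folder in data_dir/archive/.

    Assumption (not taken from ArchiveBox's code): each snapshot folder holds
    an index.json object with "url" and "title" keys.
    """
    archive_dir = Path(data_dir) / "archive"
    # Folders are visited in lexicographic order of their names.
    for index_file in sorted(archive_dir.glob("*/index.json")):
        try:
            snapshot = json.loads(index_file.read_text(encoding="utf-8"))
        except (OSError, json.JSONDecodeError):
            continue  # skip unreadable or half-written index files
        if not isinstance(snapshot, dict):
            continue  # ignore index files that are not JSON objects
        yield index_file.parent.name, snapshot.get("url", ""), snapshot.get("title") or ""
```

For example, `for folder, url, title in list_snapshots("/path/to/data"): print(folder, url, title)` would print one line per readable snapshot, skipping any folder whose index is missing or corrupt.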

⭐️ Officially recommended alternatives to ArchiveBox:

*If you want better fidelity for very complex interactive pages with heavy JS/streams/API requests, check out [ArchiveWeb.page](https://archiveweb.page) and [ReplayWeb.page](https://replayweb.page).*

*If you want more bookmark categorization and note-taking features, check out [Archivy](https://archivy.github.io/), [Memex](https://github.com/WorldBrain/Memex), [Polar](https://getpolarized.io/), or [LinkAce](https://www.linkace.org/).*

*If you need more advanced recursive spider/crawling ability beyond `--depth=1`, check out [Browsertrix](https://github.com/webrecorder/browsertrix-crawler), [Photon](https://github.com/s0md3v/Photon), or [Scrapy](https://scrapy.org/) and pipe the URLs they output into ArchiveBox.*

For more alternatives, see our [list here](https://github.com/ArchiveBox/ArchiveBox/wiki/Web-Archiving-Community#Web-Archiving-Projects)...

ArchiveBox is neither the highest-fidelity nor the simplest tool available for self-hosted archiving; rather, it's a jack-of-all-trades that tries to do most things well by default. We encourage you to try these other tools made by our friends if ArchiveBox isn't suited to your needs.
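`archivebox add` reads URLs from stdin, so handing off to an external crawler can be a shell pipe. If the crawler's output is noisy, a small filter in between keeps only usable URLs. This is a sketch under stated assumptions: it assumes the crawler prints one URL per line (check your crawler's actual output format), and `filter_urls.py`, `unique_http_urls`, and `main` are hypothetical names. It keeps only http(s) URLs and drops duplicates while preserving their original order.

```python
import sys
from typing import Iterable, Iterator, TextIO
from urllib.parse import urlsplit


def unique_http_urls(lines: Iterable[str]) -> Iterator[str]:
    """Yield each distinct http(s) URL once, in first-seen order."""
    seen = set()
    for line in lines:
        url = line.strip()
        try:
            parts = urlsplit(url)
        except ValueError:
            continue  # e.g. malformed IPv6 hosts like "http://[::1"
        if parts.scheme not in ("http", "https") or not parts.netloc:
            continue  # skip blank lines, log messages, and non-web schemes
        if url not in seen:
            seen.add(url)
            yield url


def main(stdin: TextIO = sys.stdin, stdout: TextIO = sys.stdout) -> None:
    """Copy crawler output from stdin to stdout, one clean URL per line."""
    for url in unique_http_urls(stdin):
        stdout.write(url + "\n")
```

Saved as `filter_urls.py` with `main()` called under an `if __name__ == "__main__":` guard, it could sit in a pipeline like `<crawler command> | python3 filter_urls.py | archivebox add`.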

Internet Archiving Ecosystem

Whether you want to learn which organizations are the big players in the web archiving space, want to find a specific open-source tool for your web archiving need, or just want to see where archivists hang out online, our Community Wiki page serves as an index of the broader web archiving community. Check it out to learn about some of the coolest web archiving projects and communities on the web!

- Community Wiki
    - The Master Lists: Community-maintained indexes of archiving tools and institutions.
    - Web Archiving Software: Open source tools and projects in the internet archiving space.
    - Reading List: Articles, posts, and blogs relevant to ArchiveBox and web archiving in general.
    - Communities: A collection of the most active internet archiving communities and initiatives.

- Check out the ArchiveBox Roadmap and Changelog

- Learn why archiving the internet is important by reading the "On the Importance of Web Archiving" blog post.

- Reach out to me for questions and comments via @ArchiveBoxApp or @theSquashSH on Twitter

Need help building a custom archiving solution?

✨ Hire the team that built ArchiveBox to work on your project. (@ArchiveBoxApp)

(We also offer general software consulting across many industries)

Documentation

We use the GitHub wiki system and Read the Docs (WIP) for documentation.

You can also access the docs locally by looking in the ArchiveBox/docs/ folder.

Getting Started

Advanced

- Troubleshooting

- Scheduled Archiving

- Publishing Your Archive

- Chromium Install

- Cookies & Sessions Setup

- Security Overview

- Upgrading or Merging Archives

Developers

- Developer Documentation

- Python API (alpha)

- REST API (alpha)

More Info

ArchiveBox Development

All contributions to ArchiveBox are welcome! Check our issues and Roadmap for things to work on, and please open an issue to discuss your proposed implementation before starting work. Otherwise, we may have to close your PR if it doesn't align with our roadmap.

For low-hanging fruit and easy first tickets, see ArchiveBox/Issues labeled #good first ticket and #help wanted.

Python API Documentation: https://docs.archivebox.io/en/dev/archivebox.html#module-archivebox.main

Set up the dev environment


#### 1. Clone the main code repo (making sure to pull the submodules as well)

```bash
git clone --recurse-submodules https://github.com/ArchiveBox/ArchiveBox
cd ArchiveBox
git checkout dev  # or the branch you want to test
git submodule update --init --recursive
git pull --recurse-submodules
```

#### 2. Option A: Install the Python, JS, and system dependencies directly on your machine

```bash
# Install ArchiveBox + python dependencies
python3 -m venv .venv && source .venv/bin/activate && pip install -e '.[dev]'
# or: pipenv install --dev && pipenv shell

# Install node dependencies
npm install
# or
archivebox setup

# Check to see if anything is missing
archivebox --version
# install any missing dependencies manually, or use the helper script:
./bin/setup.sh
```

#### 2. Option B: Build the docker container and use that for development instead

```bash
# Optional: develop via docker by mounting the code dir into the container
# if you edit e.g. ./archivebox/core/models.py on the docker host, runserver
# inside the container will reload and pick up your changes
docker build . -t archivebox
docker run -it \
    -v $PWD/data:/data \
    archivebox init --setup
docker run -it -p 8000:8000 \
    -v $PWD/data:/data \
    -v $PWD/archivebox:/app/archivebox \
    archivebox server 0.0.0.0:8000 --debug --reload
# (remove the --reload flag and add the --nothreading flag when profiling with the django debug toolbar)
# When using --reload, make sure any files you create can be read by the user in the Docker container, e.g. with 'chmod a+rX'.
```

Common development tasks

See the ./bin/ folder and read the source of the bash scripts within.

You can also run all these in Docker. For more examples see the GitHub Actions CI/CD tests that are run: .github/workflows/*.yaml.

Run in DEBUG mode


```bash
archivebox config --set DEBUG=True
# or
archivebox server --debug ...
```

For how to view the raw SQL queries Django is running, see: https://stackoverflow.com/questions/1074212/how-can-i-see-the-raw-sql-queries-django-is-running

Install and run a specific GitHub branch


##### Use a Pre-Built Image

If you're looking for the latest `dev` Docker image, it's often available pre-built on Docker Hub; simply pull and use `archivebox/archivebox:dev`.

```bash
docker pull archivebox/archivebox:dev
docker run archivebox/archivebox:dev version
# verify the BUILD_TIME and COMMIT_HASH in the output are recent
```

##### Build Branch from Source

You can also build and run any branch yourself from source, for example to build & use `dev` locally:

```yaml
# docker-compose.yml:
services:
    archivebox:
        image: archivebox/archivebox:dev
        build: 'https://github.com/ArchiveBox/ArchiveBox.git#dev'
        # ...
```

```bash
# or with plain Docker:
docker build -t archivebox:dev https://github.com/ArchiveBox/ArchiveBox.git#dev
docker run -it -v $PWD:/data archivebox:dev init --setup

# or with pip:
pip install 'git+https://github.com/pirate/ArchiveBox@dev'
npm install 'git+https://github.com/ArchiveBox/ArchiveBox.git#dev'
archivebox init --setup
```

Run the linters


```bash
./bin/lint.sh
```

(uses `flake8` and `mypy`)

Run the integration tests


```bash
./bin/test.sh
```

(uses `pytest -s`)

Make migrations or enter a django shell


Make sure to run `makemigrations` whenever you change anything in `models.py`.

```bash
cd archivebox/
./manage.py makemigrations

cd path/to/test/data/
archivebox shell
archivebox manage dbshell
```

See also: https://stackoverflow.com/questions/1074212/how-can-i-see-the-raw-sql-queries-django-is-running

Contributing a new extractor


ArchiveBox [`extractors`](https://github.com/ArchiveBox/ArchiveBox/blob/dev/archivebox/extractors/media.py) are external binaries or Python/Node scripts that ArchiveBox runs to archive content on a page. Extractors take the URL of a page to archive, write their output to the filesystem `archive///...`, and return an [`ArchiveResult`](https://github.com/ArchiveBox/ArchiveBox/blob/dev/archivebox/core/models.py#:~:text=return%20qs-,class%20ArchiveResult,-(models.Model)%3A) entry which is saved to the database (visible on the `Log` page in the UI). *Check out how we added **[`archivebox/extractors/singlefile.py`](https://github.com/ArchiveBox/ArchiveBox/blob/dev/archivebox/extractors/singlefile.py)** as an example of the process: [Issue #399](https://github.com/ArchiveBox/ArchiveBox/issues/399) + [PR #403](https://github.com/ArchiveBox/ArchiveBox/pull/403).*
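The contract described above (a URL in, files written to disk, a result record out) can be sketched in plain Python. This is not ArchiveBox's code: `SketchResult`, `save_outlinks`, the `outlinks/links.txt` output path, and the status strings are hypothetical stand-ins, and the page fetch is passed in as a function so the example runs offline. Use a real extractor such as `singlefile.py` as the actual template.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone
from pathlib import Path
from typing import Callable
from urllib.parse import urljoin

# Crude href matcher for illustration; a real extractor would use a proper HTML parser.
HREF_RE = re.compile(r"""href\s*=\s*["']([^"']+)["']""", re.IGNORECASE)


@dataclass
class SketchResult:
    """Hypothetical stand-in for the result row an extractor reports."""
    extractor: str
    status: str      # "succeeded" or "failed" in this sketch
    output: str      # output path relative to out_dir, or an error message
    start_ts: datetime
    end_ts: datetime


def save_outlinks(url: str, out_dir: Path, fetch: Callable[[str], str]) -> SketchResult:
    """Hypothetical extractor: save the absolute URL of every href found on the page."""
    start = datetime.now(timezone.utc)
    try:
        html = fetch(url)
        links = [urljoin(url, href) for href in HREF_RE.findall(html)]
        rel_path = Path("outlinks") / "links.txt"  # one subfolder per extractor
        (out_dir / rel_path).parent.mkdir(parents=True, exist_ok=True)
        (out_dir / rel_path).write_text("".join(f"{link}\n" for link in links), encoding="utf-8")
        status, output = "succeeded", str(rel_path)
    except Exception as err:  # record the failure instead of aborting the whole run
        status, output = "failed", f"{type(err).__name__}: {err}"
    return SketchResult("outlinks", status, output, start, datetime.now(timezone.utc))
```

Returning a failed result instead of raising is a design choice in this sketch, so that one broken page or missing dependency would be recorded for review without stopping any other extractors.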

**The process to contribute a new extractor is like this:**

1. [Open an issue](https://github.com/ArchiveBox/ArchiveBox/issues/new?assignees=&labels=changes%3A+behavior%2Cstatus%3A+idea+phase&template=feature_request.md&title=Feature+Request%3A+...) with your proposed implementation (please link to the pages of any new external dependencies you plan on using)
2. Ensure any dependencies needed are easily installable via a package manager like `apt`, `brew`, `pip3`, or `npm` (Ideally, prefer external programs available via `pip3` or `npm`; however, we do support any binary installable via a package manager that exposes a CLI/Python API and writes output to stdout or the filesystem.)
3. Create a new file in [`archivebox/extractors/.py`](https://github.com/ArchiveBox/ArchiveBox/blob/dev/archivebox/extractors) (copy an existing extractor like [`singlefile.py`](https://github.com/ArchiveBox/ArchiveBox/blob/dev/archivebox/extractors/singlefile.py) as a template)
4. Add config settings to enable/disable any new dependencies and the extractor as a whole, e.g. `USE_DEPENDENCYNAME`, `SAVE_EXTRACTORNAME`, `EXTRACTORNAME_SOMEOTHEROPTION` in [`archivebox/config.py`](https://github.com/ArchiveBox/ArchiveBox/blob/dev/archivebox/config.py)
5. Add a preview section to [`archivebox/templates/core/snapshot.html`](https://github.com/ArchiveBox/ArchiveBox/blob/dev/archivebox/templates/core/snapshot.html) to view the output, and a column to [`archivebox/templates/core/index_row.html`](https://github.com/ArchiveBox/ArchiveBox/blob/dev/archivebox/templates/core/index_row.html) with an icon for your extractor
6. Add an integration test for your extractor in [`tests/test_extractors.py`](https://github.com/ArchiveBox/ArchiveBox/blob/dev/tests/test_extractors.py)
7. [Submit your PR for review!](https://github.com/ArchiveBox/ArchiveBox/blob/dev/.github/CONTRIBUTING.md) 🎉
8. Once merged, please document it in these places and anywhere else you see info about other extractors:
    - https://github.com/ArchiveBox/ArchiveBox#output-formats
    - https://github.com/ArchiveBox/ArchiveBox/wiki/Configuration#archive-method-toggles
    - https://github.com/ArchiveBox/ArchiveBox/wiki/Install#dependencies
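The config settings in step 4 follow a naming pattern: a `USE_…` switch per dependency, a `SAVE_…` switch for the extractor as a whole, and extra extractor-specific options. As a hedged illustration of how such toggles might gate an extractor, the sketch below parses them from environment-style key/value pairs. ArchiveBox's real `config.py` has its own schema and parsing rules; `SAVE_EXAMPLE`, `USE_EXAMPLETOOL`, `EXAMPLE_TIMEOUT`, `env_bool`, `ExampleExtractorConfig`, and `load_config` are all hypothetical names.

```python
import os
from dataclasses import dataclass
from typing import Mapping

TRUTHY = {"1", "true", "yes", "on"}
FALSY = {"0", "false", "no", "off"}


def env_bool(env: Mapping[str, str], key: str, default: bool) -> bool:
    """Parse a boolean toggle like SAVE_EXAMPLE=False; unset or empty means default."""
    raw = env.get(key, "").strip().lower()
    if not raw:
        return default
    if raw in TRUTHY:
        return True
    if raw in FALSY:
        return False
    raise ValueError(f"{key} must be a boolean, got {env[key]!r}")


@dataclass(frozen=True)
class ExampleExtractorConfig:
    save_example: bool     # SAVE_EXAMPLE: switch the whole (hypothetical) extractor on or off
    use_exampletool: bool  # USE_EXAMPLETOOL: allow its (hypothetical) external dependency
    example_timeout: int   # EXAMPLE_TIMEOUT: an extractor-specific option, in seconds

    @property
    def enabled(self) -> bool:
        # Run only if the extractor is switched on and its dependency is allowed.
        return self.save_example and self.use_exampletool


def load_config(env: Mapping[str, str] = os.environ) -> ExampleExtractorConfig:
    """Build the config from environment-style key/value pairs."""
    return ExampleExtractorConfig(
        save_example=env_bool(env, "SAVE_EXAMPLE", True),
        use_exampletool=env_bool(env, "USE_EXAMPLETOOL", True),
        example_timeout=int(env.get("EXAMPLE_TIMEOUT", "60")),
    )
```

With this sketch, `load_config({"SAVE_EXAMPLE": "False"}).enabled` is `False`, while an empty mapping falls back to the defaults (enabled, 60-second timeout). Rejecting unrecognized values such as `maybe` surfaces typos instead of silently treating them as off.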

Build the docs, pip package, and docker image


(Normally CI takes care of this, but these scripts can be run to do it manually)

```bash
./bin/build.sh

# or individually:
./bin/build_docs.sh
./bin/build_pip.sh
./bin/build_deb.sh
./bin/build_brew.sh
./bin/build_docker.sh
```

Roll a release


(Normally CI takes care of this, but these scripts can be run to do it manually)

```bash
./bin/release.sh

# or individually:
./bin/release_docs.sh
./bin/release_pip.sh
./bin/release_deb.sh
./bin/release_brew.sh
./bin/release_docker.sh
```

Further Reading

- Home: ArchiveBox.io

- Demo: Demo.ArchiveBox.io

- Docs: Docs.ArchiveBox.io

- Releases: Github.com/ArchiveBox/ArchiveBox/releases

- Wiki: Github.com/ArchiveBox/ArchiveBox/wiki

- Issues: Github.com/ArchiveBox/ArchiveBox/issues

- Discussions: Github.com/ArchiveBox/ArchiveBox/discussions

- Community Chat: Zulip Chat (preferred) or Matrix Chat (old)

- Social Media: Twitter, LinkedIn, YouTube, Alternative.to, Reddit

- Donations: Github.com/ArchiveBox/ArchiveBox/wiki/Donations

This project is maintained mostly in my spare time with help from generous contributors and Monadical Consulting.

Sponsor this project on GitHub

(website archiving / web crawler)

✨ Have spare CPU/disk/bandwidth and want to help the world?

Check out our Good Karma Kit...